Open source AI didn't die. It ran out of sponsors.

I've been running Qwen 3.5 locally on my laptop for eight months.

Good enough for agentic coding. Small enough to own outright. Never phones home.

Last week, Alibaba closed the version I would have upgraded to.

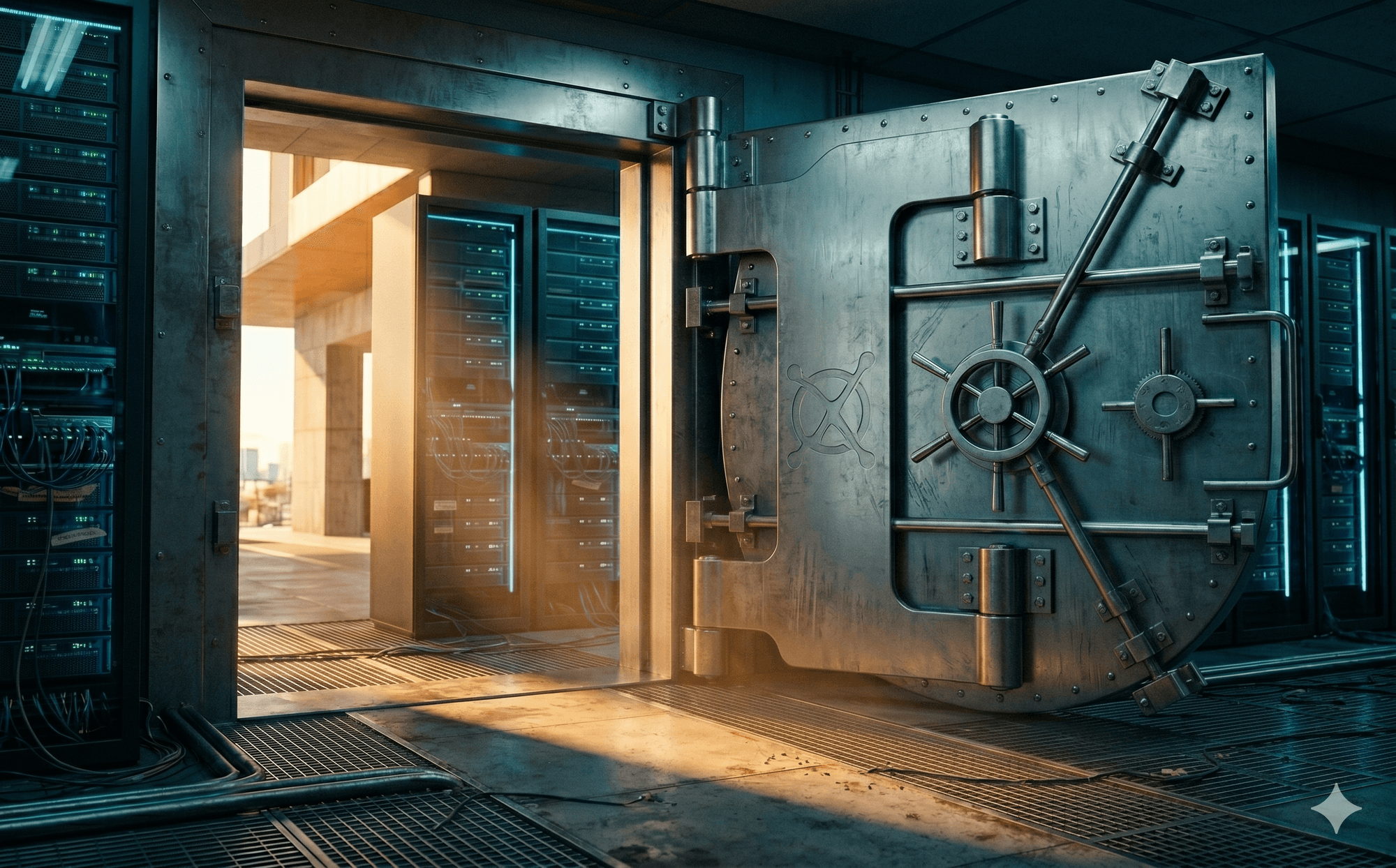

And for the first time since I started running local models, I watched a frontier release ship as an API key instead of a download link — and felt the door close behind it.

This is the post about that door.

Alibaba just closed their best model.

Qwen 3.6 Plus - the version that rivals Claude for agentic coding - is now API only.

The smaller 3.6 is still free.

China just joined the same split.

Free at the base. Paid at the frontier.

OpenAI. Anthropic. Google. Now Alibaba.

I've…

George Pu (@TheGeorgePu), April 19, 2026

The week open source broke

April 2, 2026.

Alibaba launched Qwen 3.6 Plus — their frontier agentic coding model, the one that rivals Claude.

Closed source. API only. Bloomberg framed it in three words: "focus on profit."

Two days before and one day after, Alibaba also closed Qwen 3.6 Max and Qwen 3.6 Vision.

Three frontier models in three days from the company that used to be the open source champion of the East. Zero of them downloadable.

April 15, 2026.

Alibaba killed the free tier of Qwen Code.

The GitHub notice was four lines: "Qwen OAuth free tier has been discontinued." Pro plan: $50 a month. Free quota cut from 1,000 requests to 100.

Sound familiar?

Same playbook Anthropic shipped twelve months ago with Claude Code.

Same pricing. Same math. Different logo.

The smaller Qwen3.6-35B-A3B still ships on Hugging Face under Apache 2.0.

It's genuinely good. It's also a generation behind the Plus model and will stay that way.

Here's what everyone keeps missing: Open source AI didn't lose a war. It ran out of sponsors.

The patrons went broke, got disgraced, or cashed out. What you're watching isn't ideology shifting. It's a P&L.

Act 1: The Llama 3 inflection

July 2024. Zuckerberg releases Llama 3.1 405B and publishes a manifesto called Open Source AI Is the Path Forward.

His exact words:

"I believe the Llama 3.1 release will be an inflection point in the industry where most developers begin to primarily use open source."

He was right. For eighteen months.

The entire Chinese open source ecosystem is downstream of Llama.

DeepSeek-R1-Distill-Llama is built on Llama 3.1 and 3.3 bases.

Qwen 2.5 borrowed Llama's architecture.

When DeepSeek, Mistral, and Qwen caught up to GPT-4o in open weights, it was because Meta had made it cheap to catch up.

Zuck was the sponsor.

He had a trillion-dollar market cap to burn and a strategic reason to burn it: if AI is free, the distribution moats (Instagram, WhatsApp, Facebook) keep their value.

Subsidize the commodity, protect the monopoly.

It's a good strategy. Right up until the commodity embarrasses you.

Act 2: The Llama 4 collapse

April 5, 2025. Meta ships Llama 4.

The Reddit verdict landed within 48 hours: underwhelming on coding, worse than DeepSeek and Qwen on the tasks that actually matter.

Then the benchmark scandal.

Meta had quietly used a special "experimental chat version" to juice LM Arena scores — a version that never shipped to the public.

The model people actually downloaded performed materially worse than the one Meta put on the leaderboard.

January 2, 2026. Yann LeCun, on his way out the door after a decade as Meta's Chief AI Scientist, confirms it in a public interview:

"Results were fudged a little bit."

The team, he said, had:

"used different models for different benchmarks to give better results."

Coming from the most senior AI scientist in Meta's history — someone with no obvious reason to burn his former employer on the way out — the admission was definitive.

That quote ended the open source era at Meta.

Behemoth got shelved.

The next-generation model got codenamed Avocado and delayed.

December 2025, Meta announced Muse Spark — their first frontier-class release not shipped as open weights.

VentureBeat asked if future Llama models would stay open.

Meta refused to commit.

They would only guarantee that existing models remained downloadable.

I noticed this personally.

When Llama 4 shipped I briefly tested it as a Qwen backup for my local stack. I deleted it within a week.

That was the last time I downloaded anything from Meta.

I don't expect to download another.

The sponsor stopped paying.

Act 3: The balance sheet hits Alibaba

For a moment it looked like Alibaba would pick up the torch Meta dropped.

Qwen 3 in mid-2025 was arguably the best open weights model in the world.

Justin Lin's team had genuine autonomy.

The Tongyi Lab culture was closer to DeepMind than to a typical Alibaba cost center.

Then the balance sheet arrived.

Alibaba's fiscal Q3 2026 earnings: net income down 67% to $2.4 billion.

Missed analyst estimates on both revenue and EPS.

Stock down 13% in March. Max drawdown of 37% by April 7.

You cannot run a loss-leader open source strategy when your net income is down two-thirds and your stock is down a third.

Someone at the board level did the math.

The brain drain followed the money.

Three of the top five people on the Qwen team left in one quarter:

- Binyuan Hui (technical lead, Qwen Code) to Meta;

- Justin Lin (the lead who took Qwen from lab project to 600 million downloads), destination undisclosed;

- Yu Bowen (head of post-training) gone.

The official line: "dismantling vertical silos."

The reality: end-to-end open source teams don't survive when the company reorganizes around monetizable verticals.

Three closed models in three days. Free tier killed two weeks later. Top of org chart gone. Bloomberg's headline told you everything: focus on profit.

The asymmetry

Now look at the other side.

Anthropic closed a $30 billion Series G in February 2026 at a $380 billion post-money valuation.

Two months later, VCs started offering preemptive rounds at $800 billion. Anthropic turned them down.

OpenAI sits at $500 billion and is pursuing another $100 billion round. Claude Code alone is doing $2.5 billion in annual run-rate revenue. Both companies are prepping 2026 IPOs.

Here's the scoreboard:

Anthropic + OpenAI:

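$880 billion combined at their latest valuations ($1.3 trillion if you count the $800 billion offers Anthropic turned down). $50B+ in cash. Zero pressure to monetize fast.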


Alibaba:

Net income down 67%. Stock down as much as 37%. Explicit board pressure to monetize AI immediately.

Meta:

Public benchmark scandal. Senior AI leadership gone. Pivoted to closed.

The sponsors of open source are either broke, disgraced, or no longer interested.

The frontier players have the deepest pockets in tech history and no reason to share.

The four-player equilibrium

Here's the structural point almost no one is naming.

Every frontier lab has now converged on the same product split:

- OpenAI: GPT (closed, paid) + gpt-oss (open, base).

- Google: Gemini (closed, paid) + Gemma (open, base).

- Meta: Muse Spark (closed, paid) + legacy Llama (open, freezing).

- Alibaba: Qwen 3.6 Plus (closed, paid) + Qwen3.6-35B-A3B (open, base).

Four for four. This is not a coincidence. It's an equilibrium, and the forces holding it in place are stronger than any individual company's ideology.

Why the split is stable:

The frontier funds itself.

Training a frontier model now costs $500M+ and the curve is still climbing.

No one on earth will pay that if the weights ship for free the next day.

The frontier has to be closed or it doesn't get built.

The base drives distribution.

A free mid-size model gets you on every developer's laptop, in every university course, in every third-world deployment that can't afford an API bill.

That's mindshare insurance.

Every frontier lab wants a base-tier presence the way every luxury brand wants a diffusion line.

Open weights as talent magnet.

Publishing Gemma and gpt-oss keeps Google and OpenAI credible inside the research community they're recruiting from.

The base tier is a hiring budget line disguised as a release.

The middle collapsed.

There's no "open frontier" anymore because the economics don't support it.

Either you're spending $500M+ to compete (closed) or you're riding one generation behind on donated compute (open, base).

The middle — open-weight models at the actual frontier — has no business model.

What would break the equilibrium?

Only three things:

- frontier training costs collapse by 10x, making the donation affordable again;

- a state actor — China most plausibly — decides open weights are a geopolitical weapon and subsidizes them at scale for a decade;

- a cartel forms where multiple labs co-fund open frontier releases as a competitive lever against the leader.

None of these are happening in 2026.

The first requires a research breakthrough.

The second requires political will that hasn't materialized.

The third requires trust between competitors who are prepping IPOs.

Until one of those happens, every frontier player will sit exactly where Alibaba just landed: free at the base, paid at the frontier.

Counter-arguments worth taking seriously

I'm going to steelman the case against what I just wrote.

"Mistral is still shipping. DeepSeek V4 is coming. The door isn't closed."

True, for now. But look at who's paying.

Mistral is bankrolled by the French state as an explicit sovereignty play against American AI.

DeepSeek is funded by High-Flyer, a Chinese quant hedge fund whose founder treats open weights as personal reputational capital.

These aren't businesses. They're subsidies and donations.

The minute French political priorities shift, Mistral's open releases go with them. The minute High-Flyer's founder retires, so do DeepSeek's.

A business model that depends on one government and one billionaire is not a business model.

"Training costs will fall. The frontier becomes affordable."

Maybe. But every cost reduction in training has historically been absorbed by scaling up model size, not by democratizing access.

The frontier has gotten more expensive every year despite per-FLOP costs falling every year. Betting on this reversing is betting against ten years of precedent.
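The "absorbed by scaling" point is just the product of two rates: the cost of a run is training compute times price per FLOP. Here is a minimal sketch of that arithmetic. The growth rates are hypothetical placeholders chosen for illustration, not measured figures; only the $500M starting point comes from this post.

```python
# Illustrative sketch: falling per-FLOP prices need not make the frontier cheaper.
# ASSUMPTIONS (hypothetical placeholders, not measured figures): frontier training
# compute grows 4x per year; hardware price-performance improves 1.35x per year.

def frontier_training_cost(base_cost_usd: float, years: int,
                           compute_growth: float = 4.0,
                           price_perf_gain: float = 1.35) -> list[float]:
    """Projected cost of the frontier training run for year 0..years.

    cost = compute * price_per_flop, so each year the cost is multiplied by
    compute_growth / price_perf_gain.
    """
    costs = []
    cost = base_cost_usd
    for _ in range(years + 1):
        costs.append(cost)
        cost *= compute_growth / price_perf_gain
    return costs


if __name__ == "__main__":
    # Start from the "$500M+" frontier run cited in the post.
    for year, cost in enumerate(frontier_training_cost(500e6, 3)):
        print(f"year {year}: ${cost / 1e9:.2f}B")
```

With these placeholder rates the run gets roughly 3x more expensive every year even though each FLOP gets cheaper. The cost only falls when `price_perf_gain` outruns `compute_growth`, which is exactly the "training costs collapse" scenario that would break the equilibrium.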

"China has strategic reasons to keep weights open."

The strongest argument. But Alibaba just demonstrated that Chinese companies don't have strategic reasons — they have shareholders.

The Chinese state might fund open weights through a national champion, but a state-funded national AI lab is a very different beast than Alibaba was in 2025.

It'll be smaller, slower, more politically captured, and released on a different timeline than the commercial frontier.

I could be wrong on any of these. But I'd have to be wrong on all three for the equilibrium to break. That's the bet you're making if you plan your infrastructure around an open frontier existing in 2028.

What you own vs. what you rent

The weights I downloaded in 2024 still work today.

The weights you download today will still work tomorrow.

That is the entire point of owning rather than renting.

The open tier is still useful. Qwen3.6-35B-A3B on a single GPU is genuinely good at agentic coding.

Llama 3.1 405B still runs inference at half the cost of closed models. For 80% of what I do locally — code completion, agent loops, document parsing — the base tier is enough and will remain enough.

But the base tier is now a permanently second-tier product, released by companies whose monetization plan depends on you eventually upgrading.

That's the deal. Take it with open eyes.

Here's what I'm doing about it, concretely.

Since the Alibaba news broke I've been downloading aggressively.

Not because I need every model today, but because the cost of having the weights on disk in 2028 when I need them is effectively zero, and the cost of not having them is "pay whatever the incumbent is charging that year."

The practical kit:

- Storage:

  - 2TB external SSD ($150).

  - Holds roughly every usable open frontier model shipped between 2024 and 2026, provided you store quantized (around 4-bit) weights rather than full 16-bit checkpoints.

- Compute:

  - A single machine with 48GB+ VRAM runs Qwen3.6-35B-A3B and most open DeepSeek variants.

  - I'm on an M3 Max MacBook for mobile inference and a Linux box with an H100 for heavier work.

  - Total under $10K for a setup that replicates 2025's frontier permanently.

- The download list, in priority order:

  - Qwen3.6-35B-A3B (current best open agentic coding), DeepSeek-V3 and R1 (reasoning), Llama 3.1 405B (still the best open base for fine-tuning).

  - Mistral Large 2, gpt-oss-120b, Gemma 2 27B.

Every one of these is currently on Hugging Face. Every one of these could be delisted or deprecated on any given Tuesday.
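Whether the storage and compute kit holds together is arithmetic on parameter counts: raw weight size is parameters × bits per weight ÷ 8. The sketch below is a back-of-envelope check under stated assumptions. It uses approximate total parameter counts (the Qwen3.6-35B-A3B figure is simply read off its name). It stores every model at one uniform bit width, although native formats differ in practice: DeepSeek-V3 ships in FP8 and gpt-oss in MXFP4. It also makes no allowance for file overhead or KV cache.

```python
# Back-of-envelope disk and memory footprint for the download list.
# ASSUMPTIONS: approximate total parameter counts (Qwen3.6-35B-A3B inferred from
# its name); one uniform bit width for every model; file overhead (quantization
# scales, tokenizers, configs) and KV cache are ignored.

DOWNLOAD_LIST_BILLIONS = {
    "Qwen3.6-35B-A3B": 35,
    "DeepSeek-V3": 671,
    "DeepSeek-R1": 671,
    "Llama 3.1 405B": 405,
    "Mistral Large 2": 123,
    "gpt-oss-120b": 117,
    "Gemma 2 27B": 27,
}


def weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Raw weight size in decimal GB: (params * 1e9) * bits / 8 bytes / 1e9."""
    return params_billions * bits_per_weight / 8


def total_gb(models: dict[str, float], bits_per_weight: float) -> float:
    """Combined raw weight size of every model in the list."""
    return sum(weights_gb(p, bits_per_weight) for p in models.values())


def fits(params_billions: float, bits_per_weight: float, budget_gb: float) -> bool:
    """True if the weights alone fit in budget_gb. For a mixture-of-experts
    model every expert must be resident, so pass total (not active) params."""
    return weights_gb(params_billions, bits_per_weight) <= budget_gb


if __name__ == "__main__":
    ssd_gb = 2000  # a "2TB" drive, in decimal gigabytes
    for bits in (16, 8, 4):
        size = total_gb(DOWNLOAD_LIST_BILLIONS, bits)
        print(f"{bits:>2}-bit: {size:,.1f} GB, fits on 2TB SSD: {size <= ssd_gb}")
    qwen = DOWNLOAD_LIST_BILLIONS["Qwen3.6-35B-A3B"]
    for bits in (16, 8, 4):
        print(f"Qwen3.6-35B-A3B at {bits}-bit fits in 48GB: {fits(qwen, bits, 48)}")
```

Under these assumptions the seven models come to roughly 4.1 TB at 16-bit, 2.05 TB at 8-bit and 1.02 TB at 4-bit, so a 2TB drive holds the list only in quantized form. The 48GB check passes for Qwen3.6-35B-A3B at 8-bit or 4-bit but not at 16-bit. Because it is a mixture-of-experts model, memory is set by its 35B total parameters, not the roughly 3B active per token that the name suggests. The 671B DeepSeek-V3 and R1 checkpoints need about 335 GB even at 4-bit, so on a 48GB machine the runnable DeepSeek variants are the smaller R1 distills.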

The weights don't expire. The releases do.

Alibaba will keep shipping open mid-size models — for now. Meta says the same — for now. DeepSeek still ships — because a hedge fund is paying.

None of these "for nows" are guaranteed past the next earnings season. When they stop, you cannot go back and download.

The frontier models of this era exist as files you can own, permanently, right now.

In five years you will either own them or you will pay an API bill to whatever the incumbents choose to sell you.

This is not a prediction. It's arithmetic.

Anthropic at $380 billion rejecting $800 billion offers doesn't have to share. Alibaba at -67% net income can't afford to. The sponsors are tapped out.

Open source AI wins — right up until the moment someone has to pay the bill.

Download now or subscribe forever.