TL;DR:

- There are dozens of open-source AI models and new ones drop weekly.

- Most comparison guides are benchmark tables that mean nothing to business leaders.

- Here's how we actually chose — why we went with a smaller, "less impressive" model over the flashy options, and why that turned out to be the right call.

In my last post, I wrote about self-hosting our own AI for $700 a month. A lot of people asked the same follow-up: how did you pick the model?

Honestly, this was the hardest part of the whole project. Not the setup. Not the hosting. The choosing.

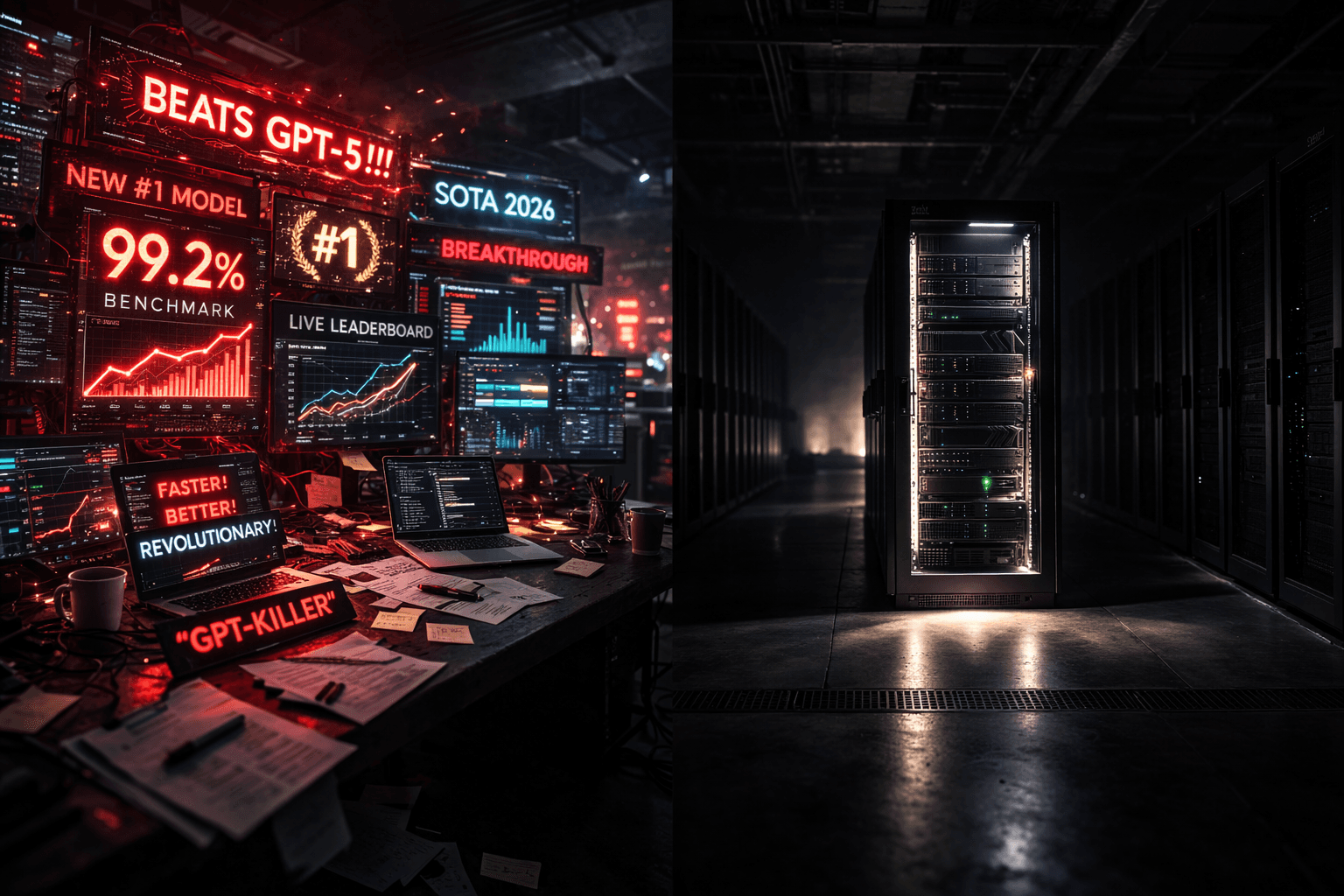

Because when you start looking into open-source AI models, it's a mess. There are dozens of them. New ones launch every week — sometimes multiple per week. Every single launch comes with a press release claiming it "matches GPT-5" or "beats Claude" on some benchmark you've never heard of. Everyone's the best at something.

And if you go looking for help, you'll find two kinds of content: technical benchmark tables that mean nothing unless you're a machine learning engineer, or "Top 10 AI Models in 2026" listicles that are outdated before you finish reading them.

Neither helps you actually decide. So here's how we actually thought about it.

The Landscape Is a Mess (And That's the Point)

Let me paint the picture. As of right now, here are just some of the open-source AI models you could choose from:

Qwen (from Alibaba), DeepSeek, Llama (from Meta), Mistral, Gemma (from Google), GLM (from Zhipu AI), Kimi (from Moonshot), and more. OpenAI (yes, OpenAI) even released an open-weight model of its own recently.

Each of these comes in multiple sizes. Qwen alone has versions ranging from tiny models that run on a phone to massive ones that need a room full of hardware. DeepSeek has a similar range.

Multiply that across every model family and you're looking at hundreds of options.

Here's what makes it genuinely confusing: these models have gotten really good. The gap between the best open-source models and the best commercial ones like Claude and GPT-5 has collapsed to almost nothing. On paper. On benchmarks. They're practically equal.

And yet — only about 13% of AI workloads actually run on open-source models. That number dropped from 19% six months ago.

Think about that. The models are nearly as good. They're free. And fewer people are using them than before.

Why? Because benchmarks lie. Or rather, benchmarks don't tell the whole story. Choosing a model isn't about picking the one with the highest score on some test. It's about matching the right model to your actual situation — your use case, your hardware, your budget, your risk tolerance.

That's a selection problem. And selection is one of the things AI can't do for you.

The Trap Everyone Falls Into

Here's the mistake. Almost everyone, when they first look at open-source models, asks: "which one is the best?"

Wrong question.

The right question is: what's the smallest model that does the job?

I know. It's counterintuitive. We're conditioned to want the best. The most powerful. The highest-spec option. In most areas of business, premium makes sense — you want the best lawyer, the best accountant, the best hire.

With AI models, the logic flips. Bigger models cost far more to run. They need dramatically more powerful hardware. They're slower. And for most everyday business tasks, they don't produce meaningfully better results than much smaller models.

I'll put it bluntly: you wouldn't rent a warehouse to store a filing cabinet. But that's what people do when they run the most powerful AI model available to draft a meeting recap.

The game isn't "how powerful can I get?" It's "how lean can I go while still getting the job done?" That shift in thinking changed everything for us.

How We Actually Decided

We had a clear use case: a private AI system for our small team, handling day-to-day tasks where we didn't want data leaving our infrastructure. Not cutting-edge research. Not complex multi-step analysis. Everyday work.

Given that, we looked at four things. Not benchmarks. These:

1. What are we actually using it for?

This determines everything. And you have to be brutal with yourself about it.

When we audited our actual AI usage across the team, the overwhelming majority fell into a few buckets: drafting and editing text, summarizing documents, answering questions about our own materials, basic code help, and sorting through information.

None of that requires the most powerful AI model on earth. Not even close.

Now look — if we were doing advanced research, building complex software, or running an AI-powered product where a wrong answer costs money or trust — completely different conversation. We'd need a bigger, more capable model and we'd pay for it.

But for internal team work? We needed "good enough." And we needed it fast and cheap. Being honest about that saved us tens of thousands of dollars.

2. What can we actually run?

This is where most guides lose non-technical people, so let me make it simple.

AI models come in different sizes, measured in "parameters": the adjustable numeric values the model tunes during training. More parameters generally means a more capable model, but also more expensive hardware to run it.

Here's the practical version:

Small models (7–14 billion parameters): Run on a single AI chip. This is what fits in a $700/month cloud setup or a high-end desktop computer. Fast responses. Low cost. This is where we landed.

Medium models (30–70 billion parameters): Need beefier, pricier hardware. Cloud costs jump to $2,000–$5,000+ per month. Manageable for a mid-size company, but a real line item.

Large models (200+ billion parameters): The ones that go toe-to-toe with Claude and GPT-5 on benchmarks. They need clusters of the most powerful chips on the market. $15,000–$50,000+ per month. This is data center territory.

We were running a single mid-tier chip on Google Cloud. That put us squarely in the small model range. And honestly? Once I saw what small models could actually do, I stopped being jealous of the big ones.
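If you want a rough feel for why parameter count maps to hardware, here's a back-of-the-envelope sketch. It assumes the weights dominate memory use and relies on the standard sizes of 2 bytes per parameter at 16-bit precision and 0.5 bytes at 4-bit quantization. The function name and the 20% overhead figure are my own illustrative assumptions, not measurements from our setup.

```python
def estimate_weight_memory_gb(params_billions: float, bytes_per_param: float,
                              overhead: float = 0.2) -> float:
    """Rough memory needed to serve a model, in GB.

    overhead is an assumed fudge factor for activations and cache,
    not a measured value.
    """
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB
    weights_gb = params_billions * bytes_per_param
    return weights_gb * (1 + overhead)


for size in (14, 32, 70):
    fp16 = estimate_weight_memory_gb(size, 2.0)  # 16-bit weights
    int4 = estimate_weight_memory_gb(size, 0.5)  # 4-bit quantized weights
    print(f"{size}B: ~{fp16:.0f} GB at 16-bit, ~{int4:.0f} GB at 4-bit")
```

Compare the numbers it prints against the memory on whatever chip you're renting. Treat it as a first filter, not a guarantee that a model will run well.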

3. Can we legally use this for business?

This one is wildly underappreciated. I'd bet most people picking an open-source model don't even check the license. Big mistake.

Not all "open-source" models are actually open for commercial use. The licensing landscape is a mess, and if you're building a business on top of one of these models, this stuff matters.

Let me break it down simply:

Fully open for business (lowest risk):

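DeepSeek uses the MIT license: do almost anything you want with it. Qwen and Mistral use Apache 2.0, which is also very permissive as long as you keep the license and attribution notices. OpenAI's new open model is Apache 2.0 too. These are your safe bets. One caveat: licenses can vary between models and versions within the same family, so check the license file for the exact model you plan to run.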

Open with strings attached (medium risk):

Meta's Llama has a "community license" that's mostly fine, but comes with limits. If your product hits 700 million monthly users, you need a special deal. You also can't use Llama's outputs to train competing models. For 99.9% of businesses this doesn't matter. But the strings are there. Know about them.

Open with a catch (higher risk):

Here's one that got my attention. Google's Gemma has a license that lets Google remotely restrict your usage if they decide you're violating their policies. Let me say that again: they can pull the rug on your production system.

I don't care how good the model is. I'm not building my business on something where someone else has a kill switch.

Not for business at all:

Some models are non-commercial only. Research and personal use. If you're running a company, don't even look at these.

We wanted the cleanest possible license. Zero strings. That narrowed our real options fast.
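The license check can be written down as a simple filter. This is a minimal sketch using the license tiers as described in this post; the dictionary, the `PERMISSIVE` set, and the function name are illustrative, and the entries should be confirmed against the license file of the exact model version you deploy.

```python
# License tiers as described in this post; always confirm against the
# license file shipped with the exact model version you plan to deploy.
LICENSES = {
    "DeepSeek": "MIT",
    "Qwen": "Apache-2.0",
    "Mistral": "Apache-2.0",
    "OpenAI open model": "Apache-2.0",
    "Llama": "Llama Community License",
    "Gemma": "Gemma Terms of Use",
}

# Licenses we treat as "zero strings" for commercial use.
PERMISSIVE = {"MIT", "Apache-2.0"}


def clean_license_candidates(licenses: dict[str, str]) -> list[str]:
    """Return model families whose license is in the permissive set."""
    return sorted(name for name, lic in licenses.items() if lic in PERMISSIVE)


print(clean_license_candidates(LICENSES))
```

Running the filter first keeps you from falling for a model you can't safely build on.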

4. What does it actually cost?

Here's where it got concrete:

Qwen 14B (what we chose): ~$700–$1,200 per month on a single chip. This is what most small to mid-size teams need.

Qwen 32B (the step up): ~$1,900–$3,600 per month. Needs more powerful hardware. Noticeably more capable on complex tasks.

DeepSeek V3 (the one that competes with Claude and GPT-5): $20,000–$50,000 per month. Needs 8+ of the most expensive chips available.

When you lay it out like this, the decision becomes obvious. DeepSeek V3 is genuinely incredible. It matches frontier models on most tests. But it costs roughly 30–70x more to run than our $700-a-month Qwen setup. For our use case, internal team tasks, that premium buys us essentially nothing.

That's the math most people never do. They look at the benchmarks and say "DeepSeek is the best open-source model, let's use that." Then they see the infrastructure bill and either give up on self-hosting entirely, or spend way more than they need to.
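Here's that math as a short sketch, using only the monthly ranges quoted above. The `COSTS` table and the function name are illustrative, and the baseline is the low end of the Qwen 14B range, which is what we pay.

```python
# Monthly cost ranges in USD, taken from the figures quoted in this post.
COSTS = {
    "Qwen 14B": (700, 1_200),
    "Qwen 32B": (1_900, 3_600),
    "DeepSeek V3": (20_000, 50_000),
}


def cost_multiple(model: str, baseline: float) -> tuple[float, float]:
    """Low and high monthly cost of `model` as a multiple of `baseline`."""
    low, high = COSTS[model]
    return low / baseline, high / baseline


baseline = COSTS["Qwen 14B"][0]  # the $700/month setup
for name in COSTS:
    lo, hi = cost_multiple(name, baseline)
    print(f"{name}: {lo:.1f}x to {hi:.1f}x the baseline")
```

Against a $700 baseline, DeepSeek V3 comes out at roughly 29x to 71x. Swap in your own baseline and the multiples change, but the shape of the decision usually doesn't.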

Why We Went With Qwen

After all of that, we landed on Qwen3-14B. Simple as that. Here's the reasoning:

It fits our hardware.

One chip. No exotic setup. The thing just runs.

The license is clean.

Apache 2.0. Full commercial use. No one can remotely restrict it, revoke it, or change the terms on us.

There's a clear upgrade path.

This is one I almost overlooked. Qwen is now the most downloaded open-source AI model family in the world. It has versions from tiny to massive, which means if we outgrow the 14B, we step up to the 32B or a larger sibling without changing our whole infrastructure. Same family, bigger brain. That matters: you don't want to rebuild everything from scratch when you need more power.

It does the job.

For the 80% of tasks we throw at it — drafting, summarizing, sorting, answering questions — the quality is solid. Not "wow this is Claude Opus." But solid. Good enough that the team uses it daily without complaints.

The cost makes sense.

$700 a month for a private, sovereign AI system. No data leaves our servers. No third party reads our prompts. For what we get, that's a no-brainer.

Could we have gone bigger? Of course. Could we have run DeepSeek V3 and gotten responses closer to Claude quality? Absolutely. But at 30x the cost, for tasks that don't need that level of horsepower, that would have been a bad decision dressed up as a good one.

What Actually Surprised Us

A few things I genuinely didn't expect:

Small models are way better than their reputation.

I'll admit it — I went in thinking anything below the top tier would feel like a major downgrade. It doesn't. For routine work, the difference between a 14 billion parameter model and a 700 billion one is often invisible. The gap only shows on complex, multi-step stuff — which for us is maybe 20% of daily usage. I was wrong about this, and I'm glad I was.

The model actually thinks.

I expected basic Q&A to work, but I was skeptical about more structured tasks. Nope. The model reasons through problems, makes tool calls, processes multi-part requests. It feels much more capable than the size would suggest. This was the biggest surprise.

Benchmarks told us almost nothing useful.

Every model's marketing highlights the benchmarks where they win. Those numbers don't tell you how a model handles your work, on your hardware, with your team using it in their way. We tested a few options before settling on Qwen. The benchmarks would have predicted a different winner. Trust the test, not the score.

There are hidden costs.

The chip rental is the obvious expense. What's less obvious: the time spent configuring, tuning, testing, and monitoring. For our small team, it was around 10–15 hours of setup and testing total. Manageable. But if you're a larger company with more complex needs, budget for the engineering time on top of the infrastructure cost. It's real.

Where Small Models Honestly Fall Short

I want to be straight about this. The open-source AI space has too much hype and not enough honesty about what these models can't do.

Complex, multi-constraint problems.

Ask a small model to handle several requirements at once — "rewrite this while maintaining X, accounting for Y, and following Z" — and it will drop pieces. It forgets constraints. Bigger models are genuinely better at juggling.

Deep domain expertise.

If your questions go deep into niche territory — specialized legal analysis, complex financial modeling, advanced technical problems — small models give you surface-level generic answers. They don't have the depth. You'll feel the gap.

Long conversations.

Over extended back-and-forth, the model loses context. It starts contradicting what it said earlier or forgetting what you established three messages ago. Fine for quick tasks. Frustrating for longer working sessions.

Anything customer-facing or high-stakes.

If a wrong answer costs you money, trust, or a client — I would not rely on a small open-source model as the primary system. The error rate is higher. In high-stakes contexts, that gap between "95% accurate" and "99% accurate" is everything.

Our approach: small model for internal, low-stakes work. Claude or GPT-5 for anything complex, customer-facing, or where accuracy is critical. Route by difficulty. Use the right tool for the task. This isn't complicated, but it's the part most people skip.
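"Route by difficulty" can be as simple as a few rules. The sketch below is one way to express it, not our production code: the `Task` fields, the `choose_backend` name, and the constraint threshold are all hypothetical, and the returned labels stand in for whatever local and hosted models you actually use.

```python
from dataclasses import dataclass


@dataclass
class Task:
    prompt: str
    customer_facing: bool = False
    high_stakes: bool = False
    constraints: int = 1  # how many requirements the answer must satisfy


def choose_backend(task: Task, max_constraints: int = 2) -> str:
    """Pick a backend label for a task.

    Returns "frontier" for customer-facing, high-stakes, or
    multi-constraint work, and "local" for everything else.
    """
    if task.customer_facing or task.high_stakes:
        return "frontier"
    if task.constraints > max_constraints:
        return "frontier"
    return "local"


print(choose_backend(Task("Summarize this meeting transcript")))
print(choose_backend(Task("Reply to a client complaint", customer_facing=True)))
```

The rules are deliberately blunt. When in doubt, a task goes to the bigger model, because a wrong answer there costs more than the extra API call.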

A Simple Framework for Choosing

If you're thinking about deploying an open-source model — whether on the cloud or on your own hardware — here's how I'd approach it:

Step 1: Be honest about what you'll actually use it for.

Not the aspirational use case. The real daily usage. If it's mostly routine text work, a small model handles it. If it's complex analysis or customer-facing, think bigger.

Step 2: Know your hardware budget.

A single chip ($700–$1,200/month) handles small models well. Medium models need $2,000–$5,000/month. Frontier-competitive needs $20,000+. Or just buy the hardware and break even in months.

Step 3: Check the license.

I cannot stress this enough. Stick with MIT or Apache 2.0 — that means DeepSeek, Qwen, Mistral, or OpenAI's open model. Don't build your operations on something where someone else can change the terms or flip a switch.

Step 4: Test before you commit.

Run your real tasks through 2–3 candidates for a week. You'll know the winner faster than any comparison chart could tell you. Benchmarks are marketing. Your own testing is truth.

Step 5: Start smaller than you think you need.

You can always move up. If you outgrow the 14B, step up to 32B. Same model family, same serving setup, more capability, with only the chip getting bigger. Build the foundation once, scale the model later. Going the other way, starting too big and then trying to cut costs, is always messier.
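For Step 4, a spreadsheet works fine, but here's a minimal sketch of the tally if you'd rather script it. The model names and the 1-to-5 ratings are hypothetical placeholders; the idea is that teammates rate each candidate's output on real tasks and you compare the averages.

```python
from statistics import mean

# Hypothetical 1-5 ratings from teammates; replace with your own.
ratings = {
    "model-a": [4, 5, 3, 4],
    "model-b": [3, 4, 4, 3],
    "model-c": [5, 4, 4, 5],
}


def rank_candidates(scores: dict[str, list[int]]) -> list[tuple[str, float]]:
    """Sort candidates by mean rating, best first."""
    averages = {name: mean(vals) for name, vals in scores.items()}
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)


for name, avg in rank_candidates(ratings):
    print(f"{name}: {avg:.2f}")
```

A mean hides a lot, so also read the low-rated outputs before you pick. They show you where each model fails.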

What's Next For Us

We're running Qwen3-14B right now and it handles the job. But I'm curious — genuinely curious — about what the 32B could do. The jump reportedly makes a real difference on reasoning tasks, and the hardware cost is still within reach.

We're also looking at buying the actual hardware instead of renting cloud compute. The math says we'd break even in 5–6 months. After that, it's just electricity. I'll share what we find.
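The break-even math is just division. In this sketch the hardware prices are hypothetical, picked only to show what a 5–6 month break-even implies against a $700/month cloud bill when electricity is ignored. The function name and the optional `monthly_power` parameter are my own additions.

```python
def months_to_break_even(hardware_cost: float, monthly_cloud: float,
                         monthly_power: float = 0.0) -> float:
    """Months until owned hardware costs less than renting.

    Each month of ownership saves the cloud bill minus electricity.
    """
    monthly_saving = monthly_cloud - monthly_power
    if monthly_saving <= 0:
        raise ValueError("owning never breaks even at these rates")
    return hardware_cost / monthly_saving


# Hypothetical hardware prices, chosen only to illustrate the formula.
for price in (3_500, 4_200):
    print(f"${price:,}: {months_to_break_even(price, 700):.1f} months")
```

Add a realistic `monthly_power` figure and the break-even point moves out a little, which is worth checking before you buy.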

And the bigger picture here — every month, these open-source models get better. Fast. The gap between free and paid is shrinking. A year from now, the model we're running for $700 a month might be beaten by something you can run on a laptop for free.

The question isn't whether open-source AI is good enough for your business. For a lot of use cases, it already is. The question is whether you figure that out now — while you can build calmly and learn — or later, when the competitive pressure or the regulation forces your hand. And by then? You're already behind.

Own your AI, or be owned by whoever does.
